In a previous post, we looked at the Azure OpenAI Add your data feature (preview), which can be configured from the Azure OpenAI Chat playground. In a couple of steps, you can point to or upload files of a supported file type (PDF, Word, …) and create an Azure Cognitive Search index. That index can subsequently be queried to find relevant content to inject into the prompt of an Azure OpenAI model such as gpt-35-turbo or gpt-4. The injection is done for you by the Add your data feature!

Although this feature is fantastic and easy to configure in the portal, you probably want to use it in a custom application. So in this post, we will take a look at how to use this feature in code.

You can find a notebook with some sample code here: https://github.com/gbaeke/azure-cog-search. The code is a subset of the code used by Microsoft to create a web application from the Azure OpenAI playground. That code can be found here. Although the code is written in Python, it does not use specific OpenAI or Azure OpenAI libraries. The code uses the Azure OpenAI REST APIs only, which means you can easily replicate this in any other language or framework.

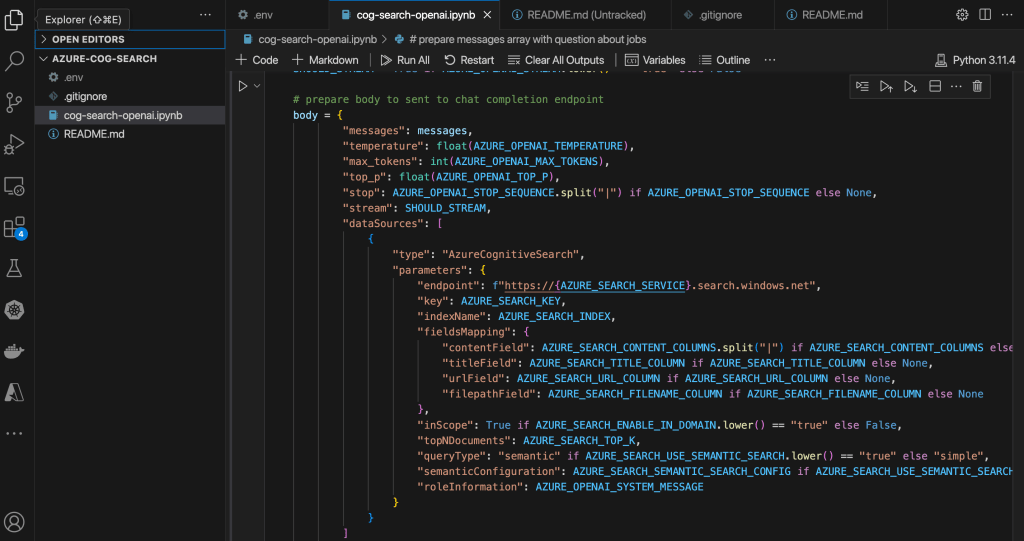

Before you run the code, make sure you clone the repo and add a .env file as described in README.md. The code itself is in a Python notebook, so make sure you can run notebooks. I use Visual Studio Code for that.

To configure Visual Studio Code, check https://code.visualstudio.com/docs/datascience/jupyter-notebooks.

The code itself does the following:

- Create Python variables from environment variables to configure both Azure Cognitive Search and Azure OpenAI. Make sure you have read the previous blog post and/or watched the video so that you have both of these resources. You will need the short names of these services and their authentication keys. The video at the end of this post discusses the options in some more detail.

- Prepare a messages array with a user question: "I am an Azure architect. Is there a job for me?" In the previous post, I added documents with job descriptions. You can add any documents you want and modify the user question accordingly.

- Prepare the JSON body for an HTTP POST. The JSON body will be sent to the Azure OpenAI chat completion (extensions) API and will include information about the Azure Cognitive Search data source.

- Create the endpoint URL and HTTP headers to send to the chat completion API. The headers contain the Azure OpenAI API key.

- Send the JSON body to the chat completion endpoint with the Python requests module and extract both the full response (which includes citations) and the answer from the OpenAI model. In this code, gpt-4 is used.
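The steps above can be sketched roughly as follows. This is a minimal sketch, not the notebook's actual code. The helper names are hypothetical, and the environment variable names AZURE_SEARCH_SERVICE, AZURE_SEARCH_INDEX, AZURE_SEARCH_KEY and AZURE_OPENAI_KEY are assumptions; check README.md for the real ones. The field names in the `dataSources` section are also an assumption about the 2023-06-01-preview schema, so verify them against the OpenAPI specification.

```python
import json
import os


def load_settings(env=os.environ):
    """Read service names and keys from environment variables (names partly assumed)."""
    return {
        "search_service": env.get("AZURE_SEARCH_SERVICE", ""),
        "search_index": env.get("AZURE_SEARCH_INDEX", ""),
        "search_key": env.get("AZURE_SEARCH_KEY", ""),
        "openai_resource": env.get("AZURE_OPENAI_RESOURCE", ""),
        "openai_model": env.get("AZURE_OPENAI_MODEL", ""),
        "openai_key": env.get("AZURE_OPENAI_KEY", ""),
        "api_version": env.get("AZURE_OPENAI_PREVIEW_API_VERSION", "2023-06-01-preview"),
    }


def build_body(settings, question):
    """Build the JSON body: the user message plus the Cognitive Search data source."""
    return {
        "messages": [{"role": "user", "content": question}],
        "temperature": 0,
        "max_tokens": 1000,
        "stream": False,
        # Assumption: field names as used by the preview extensions API.
        "dataSources": [
            {
                "type": "AzureCognitiveSearch",
                "parameters": {
                    "endpoint": f"https://{settings['search_service']}.search.windows.net",
                    "key": settings["search_key"],
                    "indexName": settings["search_index"],
                    "queryType": "simple",  # lexical search, no semantic ranking
                },
            }
        ],
    }


def build_request(settings):
    """Return the extensions endpoint URL and the HTTP headers with the API key."""
    endpoint = (
        f"https://{settings['openai_resource']}.openai.azure.com/openai/deployments/"
        f"{settings['openai_model']}/extensions/chat/completions"
        f"?api-version={settings['api_version']}"
    )
    headers = {"Content-Type": "application/json", "api-key": settings["openai_key"]}
    return endpoint, headers


def ask(settings, question):
    """POST the body to the chat completion extensions API and return the parsed JSON."""
    import requests  # third-party package, imported here so the helpers above work without it

    endpoint, headers = build_request(settings)
    response = requests.post(
        endpoint, headers=headers, json=build_body(settings, question), timeout=60
    )
    response.raise_for_status()
    return response.json()
```

With a populated .env file loaded into the environment, `ask(load_settings(), "I am an Azure architect. Is there a job for me?")` would send the request. The builder functions are kept separate from the network call so the body and URL can be inspected or logged before anything is sent.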

To see the response from the code, check https://github.com/gbaeke/azure-cog-search/blob/main/cog-search-openai.ipynb. If you have worked with the OpenAI APIs before, you will notice that the response in the choices field contains two messages:

- a message with the role of tool that includes content from Azure Cognitive Search, including metadata like URL and file path

- a message with the role of assistant that contains the actual answer from the OpenAI model (here gpt-4)
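Here is a minimal sketch of how those two messages could be pulled apart. It assumes that the first choice carries a `messages` list and that the tool message's content is a JSON string with a `citations` list. The `sample` response below is hand-written for illustration and is not real API output, so compare it with the output in the notebook before relying on the field names.

```python
import json


def split_response(response):
    """Return (citations, answer) from a chat completions extensions response."""
    citations, answer = [], None
    for message in response["choices"][0]["messages"]:
        if message["role"] == "tool":
            # Assumption: the tool content is a JSON string holding a "citations" list.
            citations = json.loads(message["content"]).get("citations", [])
        elif message["role"] == "assistant":
            answer = message["content"]
    return citations, answer


# Hypothetical response, written by hand only to exercise the function.
sample = {
    "choices": [
        {
            "messages": [
                {
                    "role": "tool",
                    "content": json.dumps(
                        {
                            "citations": [
                                {
                                    "content": "Job description for an Azure architect",
                                    "url": "https://example.com/jobs/architect.pdf",
                                    "filepath": "architect.pdf",
                                }
                            ]
                        }
                    ),
                },
                {"role": "assistant", "content": "Yes, there is an Azure architect role."},
            ]
        }
    ]
}

citations, answer = split_response(sample)
print(answer)
for citation in citations:
    print(citation["filepath"], citation["url"])
```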

It’s important to note that you need to use the chat completions extensions API with the correct API version of 2023-06-01-preview. This is reflected in the constructed endpoint. Also, note it’s extensions/chat/completions in the URL!

```python
endpoint = f"https://{AZURE_OPENAI_RESOURCE}.openai.azure.com/openai/deployments/{AZURE_OPENAI_MODEL}/extensions/chat/completions?api-version={AZURE_OPENAI_PREVIEW_API_VERSION}"
```

If you are used to LangChain or other libraries that are supposed to make it easy to work with large language models, prompts, vector databases, etc… you know that the document queries are separated from the actual call to the LLM and that the retrieved documents are injected into the prompt. Of course, LangChain and other tools have higher-level APIs that make it easy to do in just a few lines of code.
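To make that separation concrete, here is a toy sketch of the two-step pattern in plain Python. It does not use LangChain or any real search service. The `retrieve` and `build_prompt` helpers are hypothetical, and the word-overlap ranking only stands in for a real document query.

```python
import re


def tokenize(text):
    """Lowercase the text and split it into alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question, documents, top_n=2):
    """Step 1: query the documents (toy ranking by number of shared words)."""
    question_words = tokenize(question)
    scored = [(len(question_words & tokenize(doc)), doc) for doc in documents]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for score, doc in scored[:top_n] if score > 0]


def build_prompt(question, retrieved):
    """Step 2: inject the retrieved documents into the prompt for the LLM."""
    context = "\n".join(f"- {doc}" for doc in retrieved)
    return (
        "Answer the question using only these documents:\n"
        f"{context}\n\nQuestion: {question}"
    )


documents = [
    "Job opening: Azure architect for cloud migration projects",
    "Job opening: data engineer with Spark experience",
    "Cafeteria menu: soup and salad",
]
question = "I am an Azure architect. Is there a job for me?"
retrieved = retrieve(question, documents)
prompt = build_prompt(question, retrieved)
print(prompt)  # this prompt would then be sent to the LLM in a separate call
```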

Microsoft has gone for direct integration of Azure Cognitive Search in the Azure OpenAI APIs version 2023-06-01-preview and above. You can find the OpenAPI specification (swagger) here. One API call is all that is needed!

Note that in this code, we are not using semantic search. In the sample .env file, AZURE_SEARCH_USE_SEMANTIC_SEARCH is set to false. Setting it to true is not enough on its own, because semantic search must also be turned on in Azure Cognitive Search. The feature is also in preview.

To understand the differences between lexical search and semantic search, see this article. In general, semantic search should return better results when using natural language queries. By turning on the feature in Cognitive Search (Free tier) and setting AZURE_SEARCH_USE_SEMANTIC_SEARCH to true, you should be good to go.
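As a rough sketch, the setting could be translated into data source parameters like this. AZURE_SEARCH_USE_SEMANTIC_SEARCH comes from the sample .env file. The `queryType` and `semanticConfiguration` field names and the AZURE_SEARCH_SEMANTIC_SEARCH_CONFIG variable are assumptions to check against the OpenAPI specification and the repo. The helper name is hypothetical.

```python
import os


def search_query_settings(env=os.environ):
    """Map the .env toggle to the query parameters of the search data source."""
    use_semantic = env.get("AZURE_SEARCH_USE_SEMANTIC_SEARCH", "false").lower() == "true"
    return {
        # Assumption: "semantic" and "simple" are the values the preview API expects.
        "queryType": "semantic" if use_semantic else "simple",
        # Assumption: name of the semantic configuration defined on the index.
        "semanticConfiguration": (
            env.get("AZURE_SEARCH_SEMANTIC_SEARCH_CONFIG", "") if use_semantic else ""
        ),
    }


print(search_query_settings({"AZURE_SEARCH_USE_SEMANTIC_SEARCH": "false"}))
```

These two values would be merged into the `parameters` of the Azure Cognitive Search data source in the request body.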

⚠️ Note that semantic search is not the same as vector search in the context of Azure Cognitive Search. With vector search, you have to generate vectors (embeddings) using an embedding model and store these vectors in your index together with your content. Although Azure Cognitive Search supports vector search, it is not used here. It’s kind of confusing because vector search enables semantic search in a general context.
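To illustrate what vector search means in general, here is a toy sketch that ranks stored content by cosine similarity. The three-dimensional vectors are made up by hand. In practice they would come from an embedding model and be stored in the index next to the content. None of this is used by the code in this post.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors of equal length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm


# Hypothetical index entries: content stored together with a hand-made vector.
index = [
    {"content": "Azure architect job description", "vector": [0.9, 0.1, 0.0]},
    {"content": "Cafeteria menu", "vector": [0.0, 0.2, 0.9]},
]

# Hypothetical embedding of the user question.
query_vector = [0.8, 0.2, 0.1]

best = max(index, key=lambda entry: cosine_similarity(query_vector, entry["vector"]))
print(best["content"])
```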

Here’s a video with more information about this blog post: